Ethical AI project declares war on deepfakes

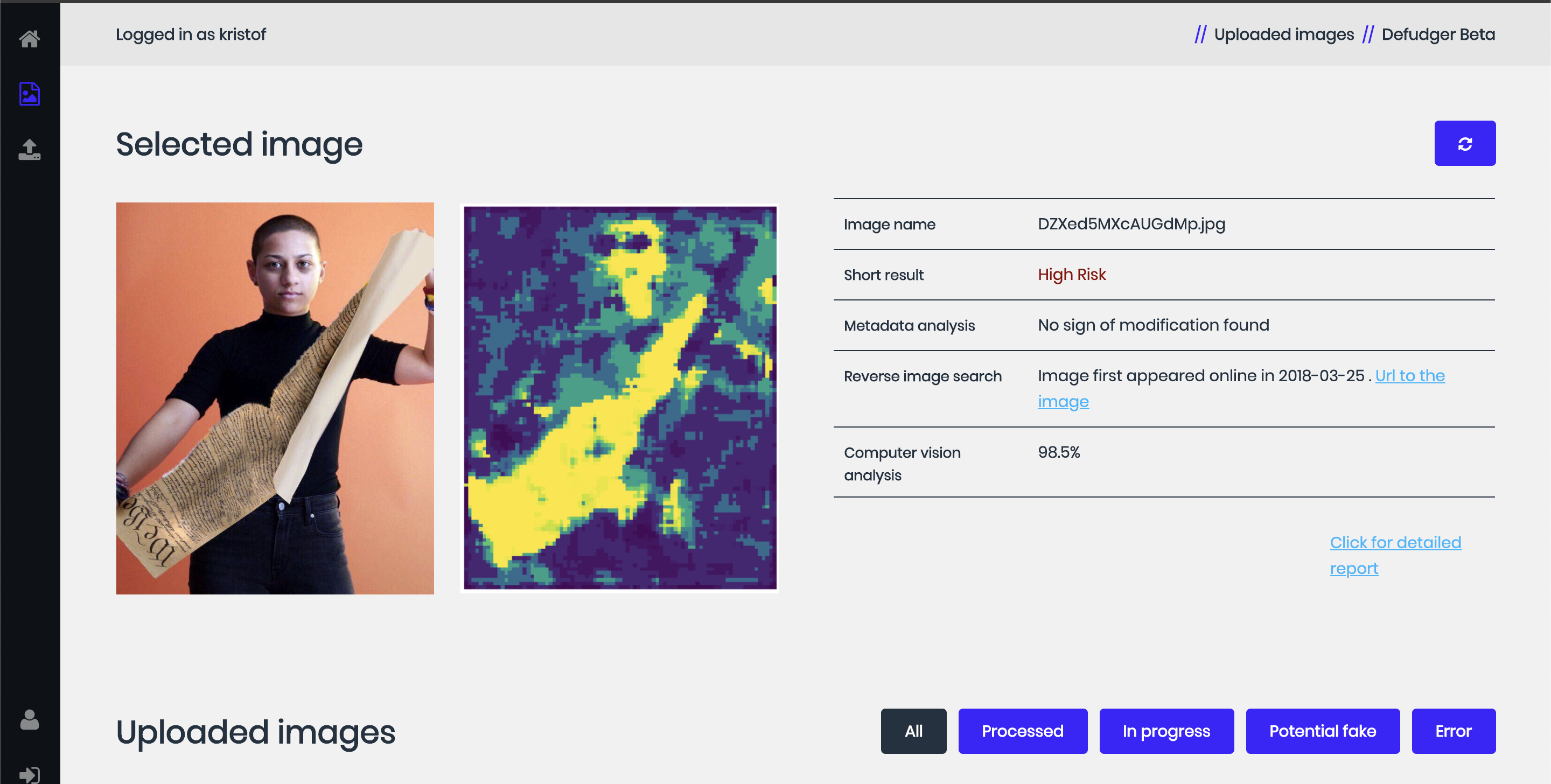

It has become increasingly tricky to tell when an image is real and when it is fake. Defudger, based at DTU, is building a tool to help media companies automate the detection and verification of fake visual content.

Most people know that you can’t believe everything you read on the internet, but increasingly you can’t even trust what you see in pictures or videos.

It is becoming easier and faster to manipulate photos, videos, and other visual material, and at the same time it has become harder to detect forgeries.

In the past, altering videos was a cumbersome and challenging process, and even modifying ordinary photographs required skill. That is no longer the case: computers have become more powerful, and editing tools easier to use. Inserting an object into a video or putting one person’s face on another’s body is easier than ever before.

Fake photos on the rise

Media fakes are a growing problem. The technique is a temptation for religious fanatics and political manipulators, and it can also be used to fabricate false evidence for insurance claims or court cases.

Kristof Szabo, CEO of Defudger, believes this problem can be solved: “We are developing a tool that uses AI to reveal where and how images and videos have been manipulated. Some of it is computer vision-based, but the main part is training algorithms that learn and get better with time. We are targeting the media industry that needs a way to reveal fake images and videos. The problem gets worse and worse. In the past, you had to be a graphic artist to make good fakes. But in the future, it can be automated, and detection will become increasingly difficult for the media industry in particular.”

The company originated at DTU Skylab Digital, a lab that the Technical University of Denmark (DTU) has created as a playground where students, researchers, and investors can develop and test ideas and receive assistance in turning them into real companies.

Ethics in everything

The university has a stated goal of developing students and businesses that support its core value of creating technology that benefits people and society. Skylab Digital focuses on blockchain, IoT, and AI. Here, students must think about ethics in their projects from the very beginning.

Ethical technology is not a new area of research. Still, as AI spreads into our daily lives and emerges in new areas, ethical considerations only become more relevant.

Some weeks ago, European Commissioner Margrethe Vestager announced an EU white paper outlining how the EU can speed up progress in AI while managing technological development so that innovations are successful, rather than alienating.

Ethics is, however, nothing new for Defudger: its solution was conceived from an ethical perspective, which has made the company one of DTU’s success stories.

“Our product is a good example of what you can do with technology. A gap is emerging that can damage democracy because it is too difficult to see through false information. Here we want to help the media industry by offering a product that makes the game more even. Soon, fake video images can be created in a few minutes, and we have to prepare for that reality,” says Szabo.

Fraud and scams

Defudger’s basic idea of detecting forgeries using AI has potential applications in, for example, uncovering insurance fraud or helping social media companies remove fake news from users’ feeds.

For Defudger, the ethical dimension isn’t just about creating a product that serves a useful purpose; it’s also about preventing others from using it in the wrong way.

That’s why the company’s developers keep Defudger’s AI algorithms a closely held secret: at worst, this information could be used to create better deepfakes.

“There is an ethical dilemma in that our product can be used like this, but we try to be transparent about what this technology is capable of, and we try to educate people on how they can be affected by fakes. We work to find a business model where we can collaborate with media and fact-checking companies. We start small, but we also know that our AI can potentially find.”